Early Tools of the Rails Developer

A primer in the ancient ways of Homo Techne.

March 9, 2019

This is Part 1 in our series about our Ruby on Rails DevOps stack. We are a small but mighty consultancy that maintains dozens of production Rails apps for our clients. This series will talk about how we manage to do that without going crazy. But first, the crazy…

We've been writing Rails apps for 14 years.

That feels like an eternity on the technology timeline.

When we started, Rails was little more than an exciting mailing list. There could not have been more than a few hundred folks reading about what DHH was working on. We all saw the potential of the framework and eagerly awaited the release of Rails 1.0 in December of 2005.

Our first "Hello World" Rails apps were exhilarating to write, and I believe that feeling persists today for folks who try Rails out for the first time. But getting those early apps to run outside of development was... well, not so exciting.

2005

In 2005 AWS was only a twinkle in Jeff Bezos' eye. There were no VPS companies. Having a server for your web application often meant literally owning pieces of hardware. Our early clients invested tens of thousands of dollars in server hardware, paid huge monthly fees for co-location services, and paid us to keep the servers up to date.

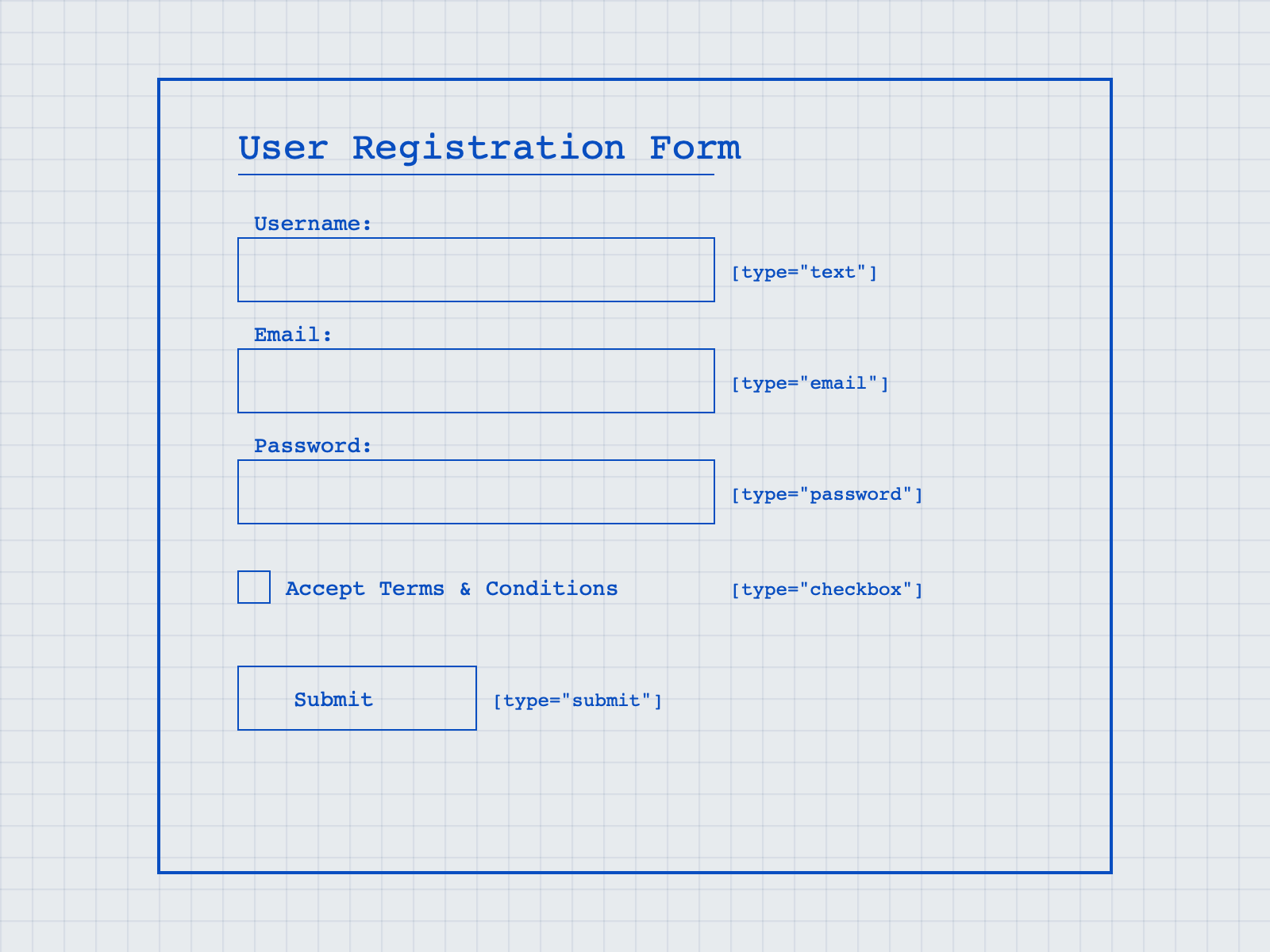

Those of us without that kind of capital hacked static web hosting companies' servers to do our bidding. It wasn't pretty and, for us, involved a 34-page step-by-step how-to for hacking DreamHost using FastCGI:

2006 Flashback: This 34 page document was my guide to getting a rails application deployed #oof http://t.co/s7MTm5Zh

— Nathan Colgate (@nathancolgate) September 14, 2012

Source control was handled by Subversion, as git was still in its infancy. Deployment was handled via FTP. The few gems that were available were statically vendored into your application as plugins. Your database was MySQL.

In short: deploying a Rails application to production was feasible, but it was an uphill battle all the way.

2006

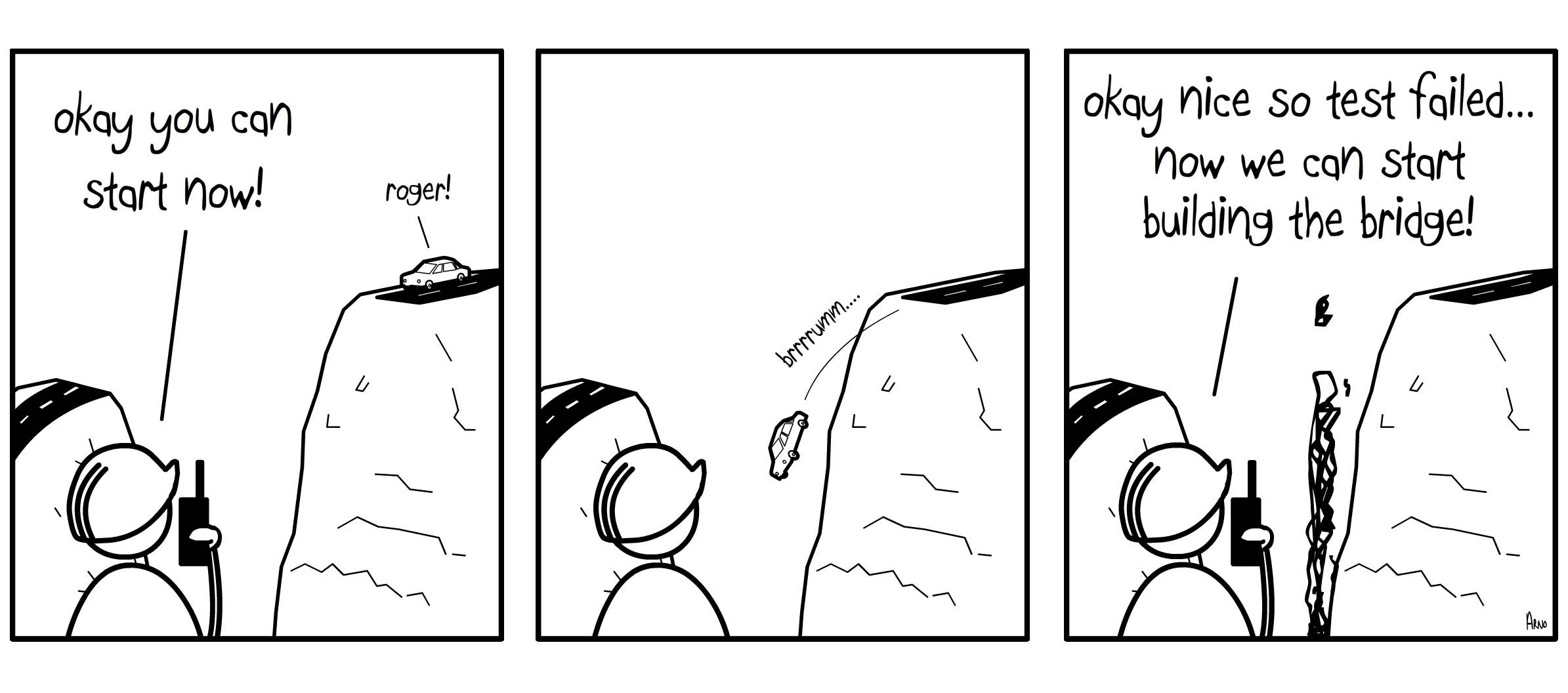

The game started changing when Capistrano came to town. Application deployment could now be configured locally and run via SSH across multiple servers with a single rake task.

Also around this time, I remember a server configuration gem called "chef". But not chef as we know it today. It was a precursor, a much more lightweight gem that behaved like Capistrano and configured your server via rake tasks. I clearly remember the first time I learned about chef from a Capistrano how-to. The document stated "By the end of this tutorial you will want to buy Adam Jacob a beer" and it was absolutely right!

Meanwhile, VPS companies like Slicehost and Rackspace were popping up to compete with AWS. Postgres also started gaining traction as the data store of choice. We could rent a server, configure it within an hour, and have an application running on it within minutes thanks to our friend Capistrano.

But chef was quickly turned into the great company it is today.

2007

Things are moving fast now. Rails and other web frameworks are taking over the world. Platforms as a Service are rising up to help developers solve the problem of running their applications in production. Most notably: Heroku.

Heroku's Rails buildpack and default support of Postgres made it the clear winner for Rails developers. With its CI-type integration into git, deployment became as easy as:

$ git push heroku

2008–2016

These early years were like riding one giant wave. Changes came often and were sweeping (does anybody remember that Rails originally shipped with Prototype?). But by the release of Rails 2 at the end of 2007 the ecosystem had taken root. Ruby libraries (gems) were more readily available, which led to the addition of a Gemfile in Rails 3. PaaS providers continued to spring up and specialize. But our internal devops processes here at Brand New Box did not change much until we started running into problems in 2017.

2017

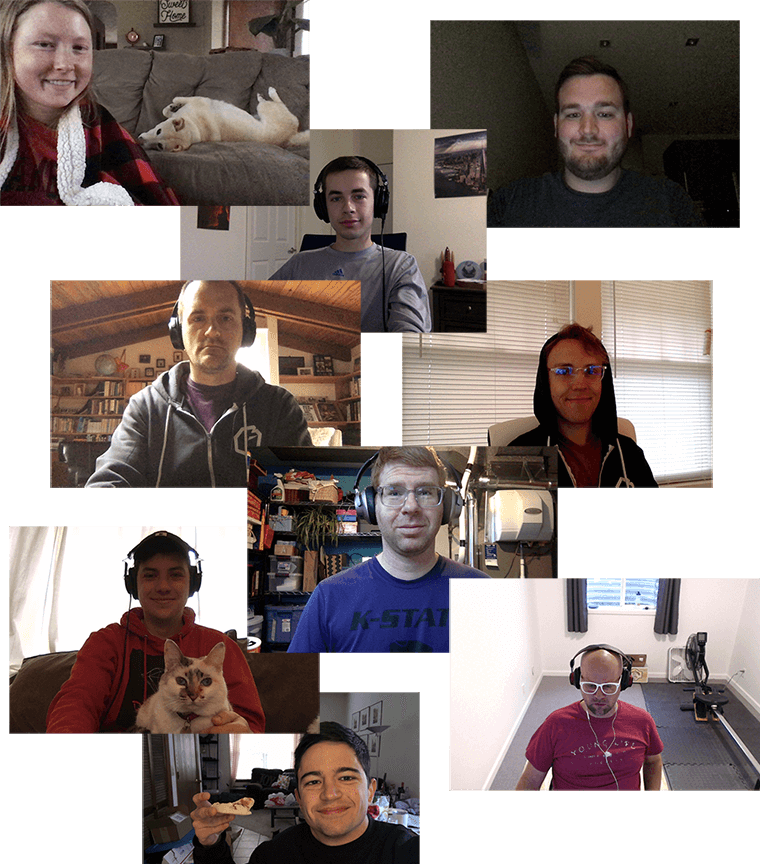

It felt like I woke up one morning and realized for the first time that we had organically grown into a team of eight managing 36 Rails apps in production, ranging from Rails 2 (not even joking) to Rails 5. Each application had its own flavor of configuration. Each server had been built using the most recent configuration specs available at the time. Getting someone started on an existing project took hours of setup and configuration that sometimes clashed with existing configurations.

We had very few standards in place. It was a mess.

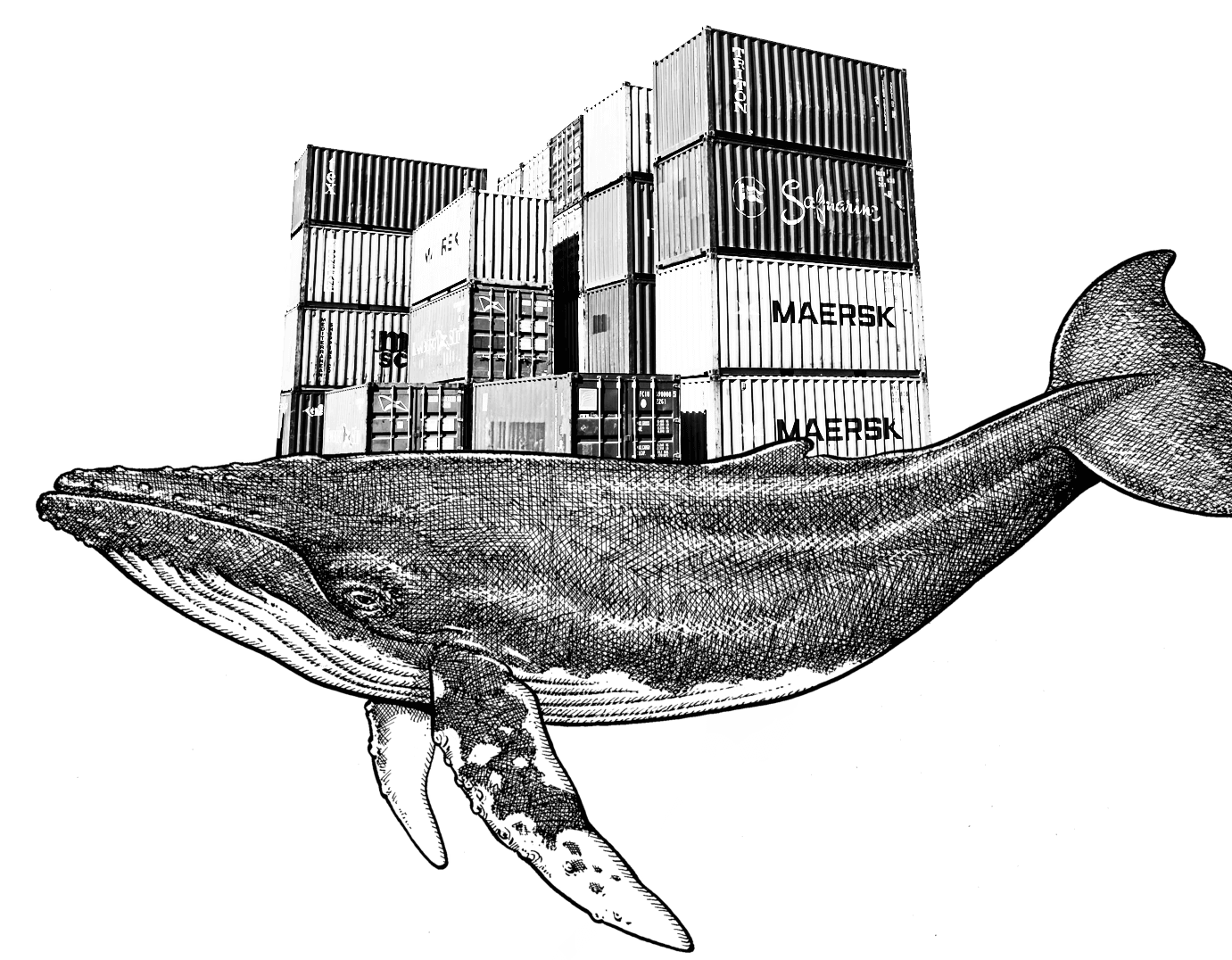

It was clear that if we were going to grow and thrive as a company, we needed to standardize our development and deployment procedure. We needed a way to allow a small team to quickly and consistently work with a large client and project list. We needed... containers.

Check back next month for Part 2 of our DevOps series: Containerization